Navigating the EU AI Act

The EU AI Act is here, and it’s already reshaping how businesses think about and act on artificial intelligence. It calls for more than compliance: a real shift in how leaders build, deploy, and trust AI in their organisations.

Why the EU AI Act Matters Now

The Act isn’t just for European companies. Whether a business operates in Berlin or Bangalore, the rules apply if it places AI systems on the EU market or if the output of its AI systems is used in the EU, for example when serving clients or users in Europe. The headlines about “risk-based AI regulation” are no exaggeration: for the most serious violations, fines can reach €35 million or 7% of worldwide annual turnover, whichever is higher. But there’s more at stake than avoiding penalties: this Act could define your brand’s reputation and market edge for years to come.

Key Highlights CEOs and Marketers Can’t Ignore

- Risk-based framework under the EU AI Act:

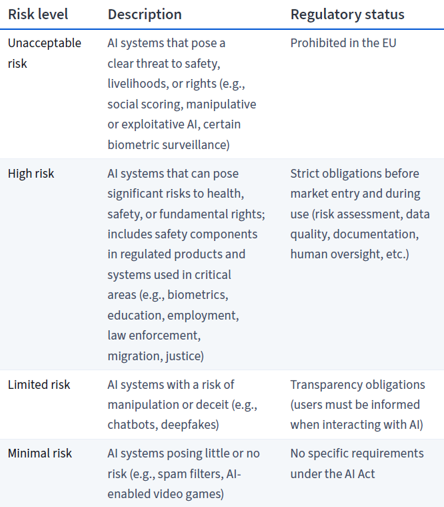

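AI isn’t treated as one-size-fits-all. Instead, the Act sorts systems into four risk levels: unacceptable risk (banned outright, such as social scoring), high risk (permitted but subject to strict requirements such as risk management, human oversight, and conformity assessment, for example AI used in recruitment or credit scoring), limited risk (transparency duties, such as telling people they are interacting with a chatbot), and minimal risk (most everyday AI, such as spam filters, with no risk-specific requirements).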

- AI literacy is mandatory:

Every organisation that provides or deploys AI systems must take measures to ensure its staff have a “sufficient level of AI literacy.” The AI Act defines “AI literacy” as the “skills, knowledge and understanding that allow providers, deployers and affected persons, taking into account their respective rights and obligations in the context of this Regulation, to make an informed deployment of AI systems, as well as to gain awareness about the opportunities and risks of AI and possible harm it can cause.” That means real understanding: not just technical skills, but also ethical and practical know-how. The obligation is set out in Article 4, which has applied since 2 February 2025, and the Act’s penalty provisions apply from August 2025.

- Not just a compliance checkbox:

While no direct penalty for poor AI literacy is defined, evidence of staff training and awareness is expected, especially if something goes wrong. Think of it as brand and legal insurance.

What Realistic CEOs and Marketers Should Do

Plenty of advice floats around, but let’s zero in on how to turn the Act into a source of resilience and opportunity. Here’s a cheat sheet:

1. Build AI Governance from the Top Down

- Form a cross-functional team (business leads, IT, legal, HR) to own AI governance and policies.

- Secure visible buy-in and participation from C-suite and board; the tone from the top drives adoption.

- Regularly update your principles and employee guidelines to reflect both risk and market priorities.

2. Get Clear on AI Inventory and Risks

- Map all AI use—both official systems and “shadow AI” tools employees are using.

- Classify by risk tier; align training and oversight accordingly.

- Don’t neglect “low-risk” systems, as requirements may evolve.
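To make the inventory step concrete, here is a minimal Python sketch of an AI register grouped by risk tier. The four tiers mirror the Act’s risk-based framework; everything else (the `AISystem` fields, the `needs_attention` rule, and the example entries and their tier assignments) is a hypothetical illustration, not a legal classification tool. Tiers are assigned by people after review; the code never infers them.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    # The four risk levels of the EU AI Act's risk-based framework.
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


@dataclass
class AISystem:
    # Hypothetical register fields, chosen for illustration only.
    name: str
    owner: str        # team accountable for the tool
    tier: RiskTier    # assigned after legal/compliance review, not computed
    sanctioned: bool  # False for "shadow AI" found in use without approval


def group_by_tier(inventory):
    """Return {RiskTier: [system names]} so oversight can be prioritised."""
    groups = defaultdict(list)
    for system in inventory:
        groups[system.tier].append(system.name)
    return dict(groups)


def needs_attention(inventory):
    """Hypothetical triage rule: flag prohibited, high-risk, or unsanctioned systems."""
    return [
        s.name
        for s in inventory
        if s.tier in (RiskTier.UNACCEPTABLE, RiskTier.HIGH) or not s.sanctioned
    ]


if __name__ == "__main__":
    # Hypothetical entries; the tier given to each one is illustrative only.
    inventory = [
        AISystem("CV screening assistant", "HR", RiskTier.HIGH, True),
        AISystem("Website chatbot", "Marketing", RiskTier.LIMITED, True),
        AISystem("Spam filter", "IT", RiskTier.MINIMAL, True),
        AISystem("Personal AI writing tool", "Sales", RiskTier.MINIMAL, False),
    ]
    print(group_by_tier(inventory))
    print(needs_attention(inventory))  # prints ['CV screening assistant', 'Personal AI writing tool']
```

Keeping the tier as an explicit field, rather than deriving it, reflects that classification is a judgment call; the register only shows where oversight and training should go first, and it keeps shadow AI visible even when the tool itself is low-risk.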

3. Make AI Training Real, Not Box-Ticking

- Tailor learning to different teams with concrete, role-specific examples (your legal team needs different guidance from your digital marketers).

- Use formats that people actually engage with: live workshops, on-demand modules, even hands-on tools and promptathons.

- Recognise and credential progress—employees value it, and so do regulators.

4. Document, Track, and Share Learning

- Maintain a simple but robust log of who’s trained, on what, and when. Tie training to business outcomes such as adoption rates, reduced risk, and new AI-powered wins.

- Share success stories; make learning visible and part of the culture.

5. Commit to Continuous Review

- Schedule updates: in tech, “set and forget” is a recipe for obsolescence. Refresh training for high-risk AI at least once a year.

- Set triggers for an out-of-cycle review when major technology, regulatory, or business shifts occur. Proactive is always better than reactive.
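The training log from step 4 and the annual refresh from step 5 fit naturally together. The Python sketch below assumes a hypothetical `TrainingRecord` structure and a 365-day refresh interval based on the annual-refresh suggestion above; it illustrates the record-keeping idea and is not a format the Act prescribes.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class TrainingRecord:
    # Hypothetical log entry: who was trained, on what, and when.
    employee: str
    topic: str      # e.g. "AI basics", "high-risk system oversight"
    completed: date


# Assumed policy: refresh each topic at least once a year.
REFRESH_INTERVAL = timedelta(days=365)


def latest_completion(log, employee, topic):
    """Most recent completion date for this employee and topic, or None."""
    dates = [r.completed for r in log if r.employee == employee and r.topic == topic]
    return max(dates, default=None)


def overdue(log, employees, topic, today):
    """Employees with no record for the topic, or whose last one is older than REFRESH_INTERVAL."""
    result = []
    for employee in employees:
        last = latest_completion(log, employee, topic)
        if last is None or today - last > REFRESH_INTERVAL:
            result.append(employee)
    return result


if __name__ == "__main__":
    # Hypothetical log entries for illustration.
    log = [
        TrainingRecord("alice", "AI basics", date(2024, 3, 1)),
        TrainingRecord("alice", "AI basics", date(2025, 3, 1)),
        TrainingRecord("bob", "AI basics", date(2024, 1, 15)),
    ]
    print(overdue(log, ["alice", "bob", "carol"], "AI basics", today=date(2025, 9, 1)))
    # prints ['bob', 'carol']
```

Only the latest completion counts, so an employee with an old and a recent record (like "alice") is treated as up to date, while someone never trained (like "carol") is flagged just like someone whose training has lapsed.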

An Opportunity for Public-Private Partnership

Many publicly available examples of AI literacy in action come from the private sector, but these insights become most valuable when adapted through collaborative exchanges with public institutions.

Importance of Transparency, Implementation and Risk Management under the EU AI Act

As Michal Kanownik of the Związek Cyfrowa Polska / Digital Poland Association observes: “Partnership between public and private institutions is crucial. The public sector has more knowledge about legislation and articles; the private sector can add technical and business knowledge of AI.” Combining regulatory understanding with technical know-how creates highly effective approaches to AI literacy that serve both public interests and business objectives while meeting the requirements of the EU AI Act.

The Takeaway

That’s the real reward of thinking ahead: brands that invest in practical AI literacy, transparent policies, and ongoing upskilling won’t just comply; they’ll compete better, innovate with less risk, and earn more trust from users and partners.

In a nutshell: the EU AI Act is not just Europe’s wake-up call; it’s a global nudge for all of us to get real about AI responsibility and readiness. If CEOs and marketers lead from the front (not from the sidelines), this could become a competitive advantage rather than a compliance headache by 2026.

Source: Navigating the EU AI Act by Coursera


FAQ Section

1. What is the EU AI Act?

The EU AI Act is a risk-based regulation that classifies AI systems by their potential for harm and sets compliance requirements for businesses worldwide that serve European users. Fines for the most serious violations can reach €35 million or 7% of worldwide annual turnover, whichever is higher, so it matters to global brands, not just European companies.

2. Does the EU AI Act apply outside Europe?

Yes. The EU AI Act applies to businesses wherever they are located, including companies in places like Bangalore, if they place AI systems on the EU market or the output of their AI systems is used in the EU, for example when serving clients or users in Europe. Non-compliance risks large fines and reputational damage in European markets.

3. What is AI literacy under the EU AI Act?

AI literacy under the EU AI Act means ensuring staff have the skills, knowledge, and understanding to deploy AI in an informed way, including awareness of its opportunities, risks, and potential harm. It’s mandatory for organizations that provide or deploy AI: Article 4 has applied since February 2025, and the Act’s penalty provisions apply from August 2025.

4. How should businesses prepare for EU AI Act compliance?

Businesses should build top-down AI governance with cross-functional teams, map all AI tools by risk level, deliver role-specific training, document progress, and commit to regular reviews. This turns compliance into a competitive edge through better innovation, reduced risk, and increased trust.

5. Why partner the public and private sectors for the EU AI Act?

Public-private partnerships combine regulatory knowledge from government with technical and business AI expertise from companies, creating effective AI literacy approaches. As noted by the Digital Poland Association, this collaboration meets EU AI Act requirements while serving both public interests and business goals.
